Topic Last Modified: 2011-01-31

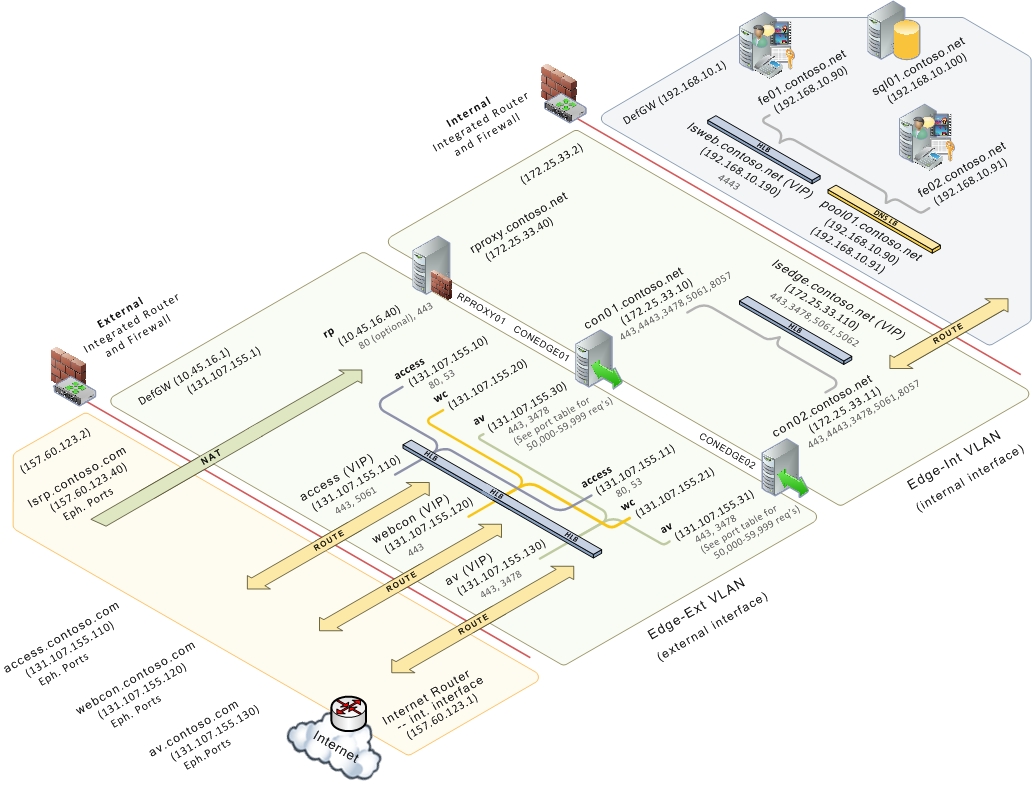

In the Edge Server pool topology, two or more Edge Servers are deployed as a load-balanced pool in the perimeter network of the data center. Hardware load balancing is used for traffic to both the external and internal Edge interfaces.

If your organization requires support for more than 5,000 Access Edge service client connections, 1,000 active Web Conferencing service client connections, or 500 concurrent A/V Edge sessions, and high availability of the Edge Server is important, this topology offers the advantages of scalability and failover support.
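
The sizing rule above can be written as a simple check. The following is a minimal sketch, not an official sizing tool: the function name `needs_edge_pool` and its parameters are hypothetical, and it assumes the rule reads as "any one of the three thresholds is exceeded, and high availability is required."

```python
# Thresholds taken from the paragraph above.
ACCESS_EDGE_LIMIT = 5000   # Access Edge service client connections
WEB_CONF_LIMIT = 1000      # active Web Conferencing service client connections
AV_EDGE_LIMIT = 500        # concurrent A/V Edge sessions


def needs_edge_pool(access_edge_clients, web_conf_clients, av_sessions,
                    high_availability_required):
    """Return True when the load-balanced Edge Server pool topology applies.

    Hypothetical helper: a pool is indicated when any single threshold is
    exceeded and high availability of the Edge Server is important.
    """
    exceeds_capacity = (
        access_edge_clients > ACCESS_EDGE_LIMIT
        or web_conf_clients > WEB_CONF_LIMIT
        or av_sessions > AV_EDGE_LIMIT
    )
    return exceeds_capacity and high_availability_required
```

For example, `needs_edge_pool(6000, 200, 100, True)` returns `True`, while a load exactly at the thresholds does not, because the rule says "more than."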

For simplicity, the Scaled Consolidated Edge Topology (Hardware Load Balanced) figure does not show any Directors, but they are recommended in a real-world production deployment. For details about the topology for Directors, see Components and Topologies for Director. The reverse proxy in the figure is also not load balanced. If it were, it would require a hardware load balancer, because DNS load balancing is not an option for reverse proxy traffic.

Note: For clarity, the .com DNS zone represents the external interface for both reverse proxy and consolidated Edge Servers, and the .net DNS zone refers to the internal interfaces. Depending on how your DNS is configured, both interfaces could be in the same zone (for example, in a split-brain DNS configuration).

Hardware Load Balancer Requirements for A/V Edge

Following are the hardware load balancer requirements for A/V Edge:

- Turn off TCP nagling for both internal and external ports 443. Nagling is the process of combining several small packets into a single, larger packet for more efficient transmission.

- Turn off TCP nagling for external port range 50,000 – 59,999.

- Do not use NAT on the internal or external firewall.

- The Edge internal interface must be on a different network than the Edge external interface, and routing between them must be disabled.

- The A/V Edge external interface must use publicly routable IP addresses, with no NAT or port translation on any of the Edge external IP addresses.
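
To illustrate what turning off nagling means, the following minimal sketch disables the Nagle algorithm on an ordinary TCP socket with the standard `TCP_NODELAY` socket option. It is an operating-system-level analogy only: on a hardware load balancer the equivalent setting lives in the vendor's TCP profile, which is not shown here. The helper names, including `nagle_must_be_off`, are hypothetical and simply encode the port list above.

```python
import socket


def open_socket_without_nagle():
    """Create a TCP socket with the Nagle algorithm disabled."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    # TCP_NODELAY = 1 sends small segments immediately instead of
    # combining them into a single, larger packet.
    sock.setsockopt(socket.IPPROTO_TCP, socket.TCP_NODELAY, 1)
    return sock


def nagle_disabled(sock):
    """Report whether TCP_NODELAY is set on the given socket."""
    return sock.getsockopt(socket.IPPROTO_TCP, socket.TCP_NODELAY) != 0


def nagle_must_be_off(port, external):
    """Hypothetical check against the A/V Edge requirements listed above.

    Port 443 applies to both internal and external interfaces; the
    50,000 to 59,999 range applies to the external interface only.
    """
    if port == 443:
        return True
    return external and 50000 <= port <= 59999
```

A check such as `nagle_must_be_off(50000, external=True)` can be used when reviewing a load balancer port inventory against these requirements.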

Hardware Load Balancer Requirements for Web Services

Following are the hardware load balancer requirements for Web Services:

- For external Web Services virtual IPs (VIPs), set cookie-based persistence on a per-port basis for external ports 4443 and 8080 on the hardware load balancer. For Lync Server 2010, cookie-based persistence means that multiple connections from a single client are always sent to one server to maintain session state. To configure cookie-based persistence, the load balancer must decrypt and re-encrypt SSL traffic. Therefore, any certificate assigned to the external Web service FQDN must also be assigned to the 4443 VIP of the hardware load balancer.

- For internal Web Services VIPs, set source_addr persistence (internal ports 80 and 443) on the hardware load balancer. For Lync Server 2010, source_addr persistence means that multiple connections coming from a single IP address are always sent to one server to maintain session state.

- Use a TCP idle timeout of 1800 seconds.

- On the firewall between the reverse proxy and the next hop pool’s hardware load balancer, create a rule to allow HTTPS traffic on port 4443 from the reverse proxy to the hardware load balancer. The hardware load balancer must be configured to listen on ports 80, 443, and 4443.
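
The two persistence modes described above can be sketched as follows. This is an illustrative model only, not how any particular hardware load balancer is implemented: the server names, the cookie name `lb_server`, and the use of a hash for source-address mapping are all assumptions. Note that the cookie-based path has to read HTTP cookies, which is why the load balancer must decrypt SSL traffic in that mode.

```python
import hashlib


def pick_by_source_addr(client_ip, servers):
    """source_addr persistence: one client IP always maps to one server.

    Assumption: a stable hash of the IP stands in for the load
    balancer's persistence table.
    """
    digest = hashlib.sha256(client_ip.encode("ascii")).digest()
    return servers[int.from_bytes(digest[:4], "big") % len(servers)]


class CookiePersistence:
    """Cookie-based persistence: a cookie pins a client to one server."""

    COOKIE_NAME = "lb_server"  # hypothetical cookie name

    def __init__(self, servers):
        self._servers = list(servers)
        self._next = 0

    def route(self, cookies):
        """Return (server, cookies_to_set) for one decrypted HTTP request."""
        server = cookies.get(self.COOKIE_NAME)
        if server in self._servers:
            # Returning client: honor the existing cookie.
            return server, {}
        # New client: pick the next server round-robin and pin it.
        server = self._servers[self._next % len(self._servers)]
        self._next += 1
        return server, {self.COOKIE_NAME: server}
```

With a pool of `["server-a", "server-b"]`, a first request with no cookie is routed to `server-a` and receives the cookie. Any later request that presents that cookie goes back to `server-a`, regardless of the client's source IP address.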

Hardware Load Balancer Requirements for Reverse Proxy

Following are the hardware load balancer requirements for reverse proxy:

- On the reverse proxy publishing rule for port 4443, set “Forward host header” to True. This ensures that the original URL is forwarded.
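
The effect of the “Forward host header” setting can be sketched as follows. This is a simplified model under stated assumptions, not the reverse proxy's actual implementation: the function `build_upstream_request` and its parameters are hypothetical, and the example host name and address are placeholders. It assumes that when the setting is off, the proxy substitutes the next hop address for the Host header.

```python
def build_upstream_request(original_host, path, upstream_address,
                           forward_host_header=True):
    """Return (connect_to, headers, path) for the request sent to the next hop.

    With forward_host_header=True the client's original Host header is
    preserved, so the next hop sees the original URL. Otherwise the
    Host header is replaced by the upstream address (assumed behavior).
    """
    host = original_host if forward_host_header else upstream_address
    connect_to = (upstream_address, 4443)  # publishing rule targets port 4443
    return connect_to, {"Host": host}, path
```

For example, `build_upstream_request("webext.example.com", "/meet", "198.51.100.20")` keeps `Host: webext.example.com` while connecting to the next hop on port 4443.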